Can you tell when someone is lying to you? Are there characteristics of the face that may indicate when someone is not being truthful? Can you tell who is lying in the video below?

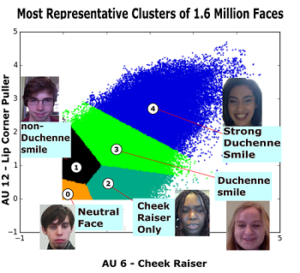

Can a computer help a human detect deceptive behavior? In our study, which examined only the facial features associated with a smile, we found that deceptive individuals displayed AU12 (lip corner puller) and AU06 (cheek raiser) more frequently than truthful individuals. Are there other facial features that could indicate deception? Could linguistic characteristics be combined with facial cues to aid lie detection? How is all this data collected and analyzed in the first place? The papers in the following section address these questions and raise even more interesting ones.
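To make "higher frequencies" concrete, here is a minimal sketch of how per-group action unit (AU) frequencies could be tallied. It assumes frame-level binary presence flags for each AU, similar to the `AU06_c` and `AU12_c` columns written by the open-source OpenFace toolkit. The participant IDs, labels, and numbers below are hypothetical toy data, not results from the study, and the function names are illustrative rather than the study's actual pipeline.

```python
from statistics import mean

# Hypothetical frame-level AU presence flags (1 = present, 0 = absent) per
# participant, in the spirit of OpenFace's AU06_c / AU12_c columns.
# Toy numbers for illustration only; NOT data from the study.
sessions = {
    "p1": {"label": "deceptive", "AU06": [1, 1, 0, 1, 0], "AU12": [1, 1, 1, 0, 0]},
    "p2": {"label": "deceptive", "AU06": [0, 1, 1, 1, 0], "AU12": [1, 1, 1, 1, 0]},
    "p3": {"label": "truthful", "AU06": [0, 0, 1, 0, 0], "AU12": [1, 0, 0, 0, 0]},
    "p4": {"label": "truthful", "AU06": [0, 0, 0, 0, 1], "AU12": [0, 1, 1, 0, 0]},
}


def occurrence_rate(flags):
    """Fraction of frames in which an action unit is present (0.0 if no frames)."""
    return sum(flags) / len(flags) if flags else 0.0


def group_rates(sessions, au):
    """Mean per-participant occurrence rate of `au`, grouped by label."""
    by_label = {}
    for info in sessions.values():
        by_label.setdefault(info["label"], []).append(occurrence_rate(info[au]))
    return {label: mean(rates) for label, rates in by_label.items()}


for au in ("AU06", "AU12"):
    print(au, group_rates(sessions, au))
```

Averaging per-participant rates, rather than pooling all frames together, keeps participants with longer recordings from dominating the group mean. A real analysis would also need a statistical test before calling any difference meaningful.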

Deception Dataset

If you would like access to the dataset described in [1], please fill out this form.

Publications

[1] Sen, T., Hasan, M. K., Teicher, Z., & Hoque, M. E. (2018). Automated Dyadic Data Recorder (ADDR) Framework and Analysis of Facial Cues in Deceptive Communication. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, 1(4), 163.

[2] Sen, T., Hasan, M. K., Tran, M., Levin, M., Yang, Y., & Hoque, M. E. (2018). Say CHEESE: Common Human Emotional Expression Set Encoder and its Application to Analyze Deceptive Communication. IEEE International Conference on Automatic Face and Gesture Recognition.

[3] Hasan, M. K., Rahman, W., Gerstner, L., Sen, T. K., Lee, S., Haut, K. G., & Hoque, M. E. (2019). Facial Expression Based Imagination Index and a Transfer Learning Approach to Detect Deception. 8th International Conference on Affective Computing and Intelligent Interaction (ACII 2019), Cambridge, UK, September 2019.

[4] Hasan, M. K., Sen, T. K., Yang, Y., Baten, R. A., Haut, K. G., & Hoque, M. E. (2019). LIWC Into the Eyes: Using Facial Features to Contextualize Linguistic Analysis in Multimodal Communication. 8th International Conference on Affective Computing and Intelligent Interaction (ACII 2019), Cambridge, UK, September 2019.

[5] Naven, G., Sen, T. K., Gerstner, L., Haut, K. G., Wen, M., & Hoque, M. E. (2020). Leveraging Shared and Divergent Facial Expression Behavior Between Genders in Deception Detection. IEEE International Conference on Automatic Face and Gesture Recognition (FG), Argentina, May 2020.

[6] Tran, M., Sen, T. K., Haut, K. G., Ali, M. A., & Hoque, M. E. (to appear). Are You Really Looking at Me? A Feature-Extraction Framework for Estimating Interpersonal Eye Gaze from Conventional Video. IEEE Transactions on Affective Computing.

Real-world impact:

Ideas from our research papers won first place in the IARPA Credibility Assessment Standardized Evaluation (CASE) Challenge in Washington, DC.

Press mentions:

https://www.newsweek.com/how-spot-liar-experts-uncover-real-signs-deception-can-you-spot-them-941954

https://www.wxxinews.org/post/ur-research-lie-detection-could-help-airports

https://www.thetimes.co.uk/article/facial-software-knows-if-you-have-something-to-hide-wsjps63r0